Using Seq.Input.MSSQL to read SQL Job Agent tables as logs!

Following on from my last post about using Seq.Input.MSSQL to read Endpoint Protection table entries as Seq logs, I wanted to share something that has really made a huge difference for us - turning the SQL Agent job tables into Seq logs!

This was actually the first full MSSQL Input integration that we went live with, and it turned out to be the most useful by far. When it comes to the SQL Agent jobs, you can't simply point Seq.Input.MSSQL at a table and have it come out with something that is readily translatable to a Seq log with structured logging - but you can make a view to do the necessary conversions.

When I originally did this piece - with the help of a whole bunch of Googling - I found that there was one particular flaw - Seq.Input.MSSQL assumed that a timestamp had milliseconds, but the Job Agent tables do not record milliseconds. The MSSQL Input always tracks the last time that a query was run, so that it doesn't do full table scans every time. In this case, that means that it was possible to 'miss' logs because the StatusDateTime was always at 0 milliseconds - but the SQL input had already gone past that time.

That was readily resolved by adding an inbuilt delay of 1 second to the input, which worked well. Later I made this configurable, because I also hit a different case where logs were added to a table only once per minute - which led to a similar type of problem that was resolvable by configuring a longer delay.

The below table does all the conversions to a timestamp and provides essentially everything useful that is available from the sysjobs, sysjobhistory, and sysjobsteps tables.

use msdbGOCREATE VIEW AgentJobs ASSELECTsjh.instance_id,@@servername as ServerName, DATEADD(SECOND,(run_duration/10000 * 60 * 60) + (run_duration/100%100 * 60) + (run_duration%100 ),dbo.agent_datetime(sjh.run_date, sjh.run_time)) StatusDateTime, dbo.agent_datetime(sjh.run_date, sjh.run_time) RunDateTime, STUFF(STUFF(RIGHT('00000' + CAST(run_duration AS VARCHAR(6)),6),3,0,':'),6,0,':') Duration, (run_duration/10000 * 60 * 60) + (run_duration/100%100 * 60) + (run_duration%100 ) DurationSecs, sj.name JobName, sjh.step_id StepId, ISNULL(sjs.step_name, 'Job Status') StepName, CASE sjh.run_statusWHEN 0 THEN 'Failed'WHEN 1 THEN 'Succeeded'WHEN 2 THEN 'Retry'WHEN 3 THEN 'Canceled'WHEN 4 THEN 'In Progress'END RunStatus, sjh.message AS StepMessageFROM dbo.sysjobs sjINNER JOIN dbo.sysjobhistory sjh ON sj.job_id = sjh.job_idLEFT OUTER JOIN dbo.sysjobsteps sjs ON sjh.job_id = sjs.job_id AND sjh.step_id = sjs.step_idWHERE DATEADD(SECOND,(run_duration/10000 * 60 * 60) + (run_duration/100%100 * 60) + (run_duration%100 ),dbo.agent_datetime(sjh.run_date, sjh.run_time)) > dateadd(dd,-1, convert(date,getdate()))GOCREATE LOGIN [DOMAIN\SEQSERVER$] FROM WINDOWSGOGRANT SELECT ON dbo.AgentJobs to [DOMAIN\SEQSERVER$]GO

And then all that's required is a Seq.Input.MSSQL instance to read in the view:

| Property | Value |

|---|---|

| Title | SERVERNAME |

| Refresh every x seconds | 5 |

| Server instance name | SERVERNAME |

| Initial catalog | msdb |

| Trusted Connection | Enabled |

| Table or view name | AgentJobs |

| Column name of TimeStamp | StatusDateTime |

| Column name of Message | StepMessage |

| Include following columns as property | ServerName,StatusDateTime,RunDateTime,Duration, DurationSecs,JobName,StepId,StepName,RunStatus,StepMessage |

| Log application name as property | AgentJobs |

| Event Level | 0 |

| Valid local time period |

That's it. Simple as that. And then all you need to expose your new logs is a signal, which could be as simple as a signal looking for "Application = 'AgentJobs'".

MSSQL Input will only log the Application property when it's logging a result from your query. We have an "Application Property Name" config which allows you to change the name of the property - I often use AppName in my Seq apps. Up to v1.2.0, if you didn't configure this, it would not log a property, but I've just put a pull request in that will change this to always log an Application property, defaulting to the title of your instance.

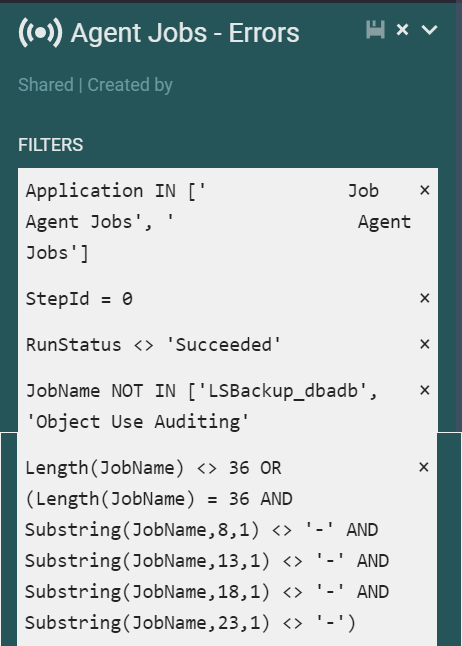

If you want to alert on errors, you might want to get even more specific with your signal. I found that a signal like the following is useful, since it allows excluding jobs that you don't want to alert, and also filters out jobs that only have a GUID as a name.

And from here, you can configure a Seq.App.Opsgenie or Seq.App.Atlassian.Jira instance to watch the signal and raise the appropriate alert or ticket, similar to my illustration for the last post on this.

I hope people find this useful - the SQL Agent job logs are a goldmine of information about your scheduled jobs, and getting them into Seq can be a game changer for monitoring and alerting!

Comments